
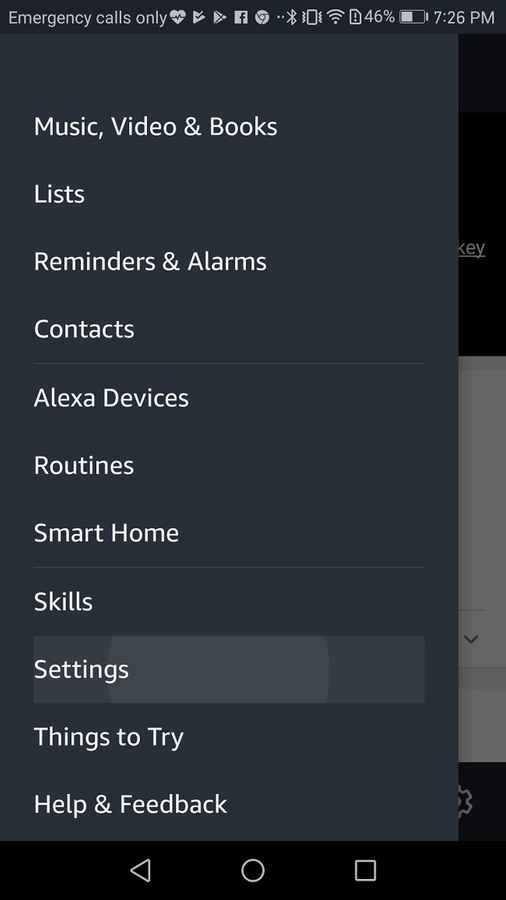

There are constant conspiracy theories about whether our gadgets - from phones to tablets - are listening to us. And as it turns out, Amazon's Alexa devices are actually recording us - even if we are not actively speaking to them.

The news has come to light again after an ex-Amazon exec told BBC's Panorama special, Amazon: What They Know About Us, that he turns off Amazon's Alexa "whenever [he] wants to have a private moment."

However, it's not always full conversations that get recorded; it could just be little sound bites. But there is a reason these recordings are taken, even if it happens without you knowing. Alexa works on 'wake words', which means it can sometimes pick up false ones when you're not interacting with it and start recording.

An Amazon spokesperson has previously said: "To help improve Alexa, we manually review and annotate a small fraction of one per cent of Alexa requests."

Can Alexa record audio? Although it is meant to respond to its wake word, you should know that Alexa can record conversations, and there are ways to control Alexa's behavior.

If you're still a bit squeamish about voice assistants, that's perfectly fine. For those split down the middle on whether or not to buy one and introduce it to your home, try easing into it by putting it in a room where the least important conversations happen, Henderson said. However, "if you are sitting up at night worrying about if your voice assistant is listening to you, maybe voice assistants aren't for you," he said. You should still follow best practices for privacy, though, because other people around you will inevitably have at least one voice assistant in their home or on their person.

Apple's Siri: You've got two options when it comes to Apple's voice assistant, which is embedded in its various iPhone models. If you're worried about voice recordings, skip to option two.

1) Clear all of Siri's search history, but without deactivating search entirely: Quit Safari on your iPhone if you currently have it open, then go to Settings > scroll down and select Safari > click Clear History.

2) Clear the Siri search history and deactivate Siri entirely: Go to Settings > General > scroll down to Siri > tap on Siri to deactivate it > return to Settings > select General again > tap on Keyboard > disable the Dictation option. Disabling Siri will clear your voice search history as well.

Google is also soon rolling out voice controls to delete data just by asking the Assistant, similar to Amazon's Alexa.
To review your voice transcripts and recordings from Google Assistant, navigate to your Google Account and make sure you're logged in. Then, on the left navigation panel, click Data & Personalization > Activity controls > Web & App Activity > Manage Activity. From there, you can take a look at your past activity; any entries with an audio icon include a recording. To listen to those recordings, click Details (next to the audio icon) > Show Recording to play. If for some reason you get an error message that reads "transcript not available," that means either your microphone was turned off or there was too much background noise.

If it's impossible to get away from devices that record audio, it's important to understand what could go wrong, in theory, to make the best choices possible to protect yourself.

This month, German security consulting firm Security Research Labs discovered a serious flaw in voice assistant security: voice data on your Google Home and Alexa devices can be hacked into through third-party apps, or skills. Researchers at the company created eight dummy apps for the platforms to illustrate possible hacking scenarios. Most of these were horoscope skills that seemed fairly innocuous. However, developers sometimes include up to a full minute of silence after users think the app has stopped running. That means anything said near the speaker during that time will be recorded without the user's knowledge and sent to the developer.

In other, even more malicious cases, a skill may tell a user that an update is ready and that Alexa or Google Assistant needs to hear the user's password to install it. These are phishing attempts to get user passwords, not legitimate requests from Amazon or Google.

"Users need to be more aware of the potential of malicious voice apps that abuse their smart speakers," the authors of the study wrote. "Using a new voice app should be approached with a similar level of caution as installing a new app on your smartphone."

1: Alexa, Ask the Listeners

This one was a shocker for many Alexa users. If you say this to the Amazon Echo voice assistant, she'll assure you that 'they' are always watching (over) and listening in to you. "They are always listening," Alexa will reply to this creeptastic command.
